Introduction

Across the landscape of artificial intelligence (AI), large models shine like bright stars, pointing toward the future of technology. They are reshaping how we understand technology and quietly driving change across many industries. These technologies also carry risks and challenges. Here, we look inside large models: their technologies and characteristics, their development and challenges, and what they suggest about the AI era.

Large models, such as the Generative Pre-trained Transformer (GPT) series, have achieved remarkable success in Natural Language Processing (NLP), setting new performance benchmarks across many language tasks. Beyond language, large models also show significant advantages in image processing, audio processing, and physiological signal analysis. They are being adopted rapidly in fields such as education, healthcare, and finance, and they are particularly strong at content generation. Many of the frontier technologies behind large models still need further development, and issues such as bias and privacy breaches remain unresolved. This article reviews the history and evolution of large models, explores frontier issues, and discusses future directions, to help general readers quickly understand large model technology and keep pace with the AI era.

Origins of Large Models

In November 2022, the AI research company OpenAI released ChatGPT, a chatbot built on the large language model GPT-3.5. Its fluent language, strong problem-solving ability, and broad knowledge drew attention worldwide. About two months after launch, ChatGPT was estimated to have passed 100 million monthly active users, making it the fastest-growing consumer application up to that point. Industries of all kinds began to feel the influence of large models, and a wave of large model research followed in China and abroad.

The origins of large models can be traced back to early AI research in the 20th century, which focused primarily on logical reasoning and expert systems. These methods were limited by hard-coded knowledge and rules, which made it difficult to handle the complexity and diversity of natural language. With the advent of machine learning and deep learning, along with rapid advances in hardware, training complex neural network models on large-scale datasets became possible, ushering in the era of large models.

In 2017, Google researchers introduced the Transformer architecture. Built around self-attention mechanisms, it significantly improved sequence modeling, particularly the efficiency and accuracy of handling long-distance dependencies. Subsequently, pre-trained language models (PLMs) gradually became mainstream. PLMs are first pre-trained on large-scale text datasets to capture general patterns of language and are then fine-tuned for specific downstream tasks.

Evolution of Large Models

OpenAI’s GPT series exemplifies generative pre-trained models and has been at the forefront of this technology. From GPT-1 to GPT-3.5, each generation brought significant improvements in scale, complexity, and performance. At the end of 2022, ChatGPT emerged as a chatbot capable of answering questions, writing articles, programming, and even mimicking human conversational styles. Its ability to answer questions on an unusually wide range of topics gave people a new understanding of the general capabilities of large language models and greatly advanced the field of NLP.

However, the development of large models is not limited to text. Multi-modal large models have begun to emerge, capable of understanding and generating several types of data, including text, images, and audio. In March 2023, OpenAI announced the multi-modal model GPT-4, which accepts image input in addition to text and exhibits more precise language comprehension, marking a significant transition from single-modal to multi-modal models. The fundamental differences between modalities place new and more complex requirements on the design and training of large models and introduce unprecedented challenges.

Characteristics of Large Models

Large models typically refer to machine learning models with vast parameter counts, especially in applications within NLP, computer vision (CV), and multi-modal domains. These models understand and learn human language through a pre-training approach, completing tasks such as information retrieval, machine translation, text summarization, and code generation through human-computer dialogue.

Parameter Count of Large Models

The parameter count of large models usually exceeds 1 billion, meaning that the model contains over 1 billion learnable weights. These parameters form the basis for the model’s learning and understanding of data, adjusting through training to better map input data to output results. The increase in parameter count is directly related to the model’s learning ability and complexity, enabling it to capture more subtle and deeper data features.
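
To make the idea of "learnable weights" concrete, the sketch below estimates the parameter count of a decoder-only Transformer from three sizes. It is a back-of-the-envelope approximation, not an exact formula: it assumes each layer holds about 12 × d_model² weights (attention projections plus a feed-forward block four times as wide) and ignores biases, layer norms, and positional embeddings. The function name is hypothetical, and the GPT-3 configuration used is the publicly reported one.

```python
def estimate_decoder_params(n_layers: int, d_model: int, vocab_size: int) -> int:
    """Rough parameter count for a decoder-only Transformer.

    Assumption: each layer holds about 12 * d_model^2 weights
    (4 * d_model^2 for the attention projections and 8 * d_model^2 for a
    feed-forward block with hidden size 4 * d_model). Biases, layer norms,
    and positional embeddings are ignored.
    """
    per_layer = 12 * d_model * d_model
    token_embeddings = vocab_size * d_model
    return n_layers * per_layer + token_embeddings


# Publicly reported GPT-3 configuration: 96 layers, width 12288, 50257 tokens.
gpt3_estimate = estimate_decoder_params(n_layers=96, d_model=12288, vocab_size=50257)
print(f"{gpt3_estimate / 1e9:.1f} billion parameters")  # prints: 174.6 billion parameters
```

The estimate shows that almost all of the weights sit in the stacked layers and that the count grows with the square of the model width.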

Types of Large Models

Large models can be classified based on their application domains and functions:

- Large Language Models: Focused on processing and understanding natural language text, commonly used for text generation, sentiment analysis, and question-answering systems.

- Visual Large Models: Specifically designed to process and understand visual information (such as images and videos), used for image recognition, video analysis, and image generation tasks.

- Multi-modal Large Models: Capable of processing and understanding two or more different types of input data (e.g., text, images, audio), executing more complex and comprehensive tasks by integrating information from different modalities.

- Base Large Models: Generally refer to models that can be widely applied to various tasks, learning a large amount of general knowledge during the pre-training phase without a specific application direction.

Capabilities of Large Models

The capabilities of large models lie in their ability to understand and process highly complex data patterns:

- Generalization Ability: Through pre-training on vast amounts of data, large models learn universal language rules, exhibiting strong generalization ability when facing new tasks.

- Deep Learning: The large parameter scale and deep network structure enable large models to establish complex abstract representations, understanding the deeper semantics and relationships behind the data.

- Context Understanding: In language models, large models can capture long-distance dependencies, enhancing their understanding of context, which is crucial for grasping subtle nuances in language.

- Knowledge Integration: Large models can integrate and utilize the knowledge learned during pre-training, sometimes exhibiting a degree of common-sense reasoning and problem-solving abilities.

- Adaptability: Although large models learn general knowledge during pre-training, they can adapt to specific tasks through fine-tuning, demonstrating high flexibility and adaptability.

Technologies Behind Large Models

Current large models are integrated machine learning models capable of processing various types of data. The foundational technologies within these large models aim to understand and generate information across different sensory modalities, enabling tasks such as image description, visual question answering, or cross-modal translation. Here are several key foundational technologies of large models.

Transformer Architecture

Most existing large models are built on the Transformer, often on its decoder alone. The architecture uses self-attention to capture global dependencies in the input, and the same mechanism can capture complex relationships between elements of different modalities. For instance, a multi-modal Transformer can process the pixels of an image and the words of a text together, learning the associations between them through its self-attention layers. This lets large models understand modalities such as text and images and keep long generated text sequences contextually coherent.
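
The self-attention step described above can be sketched in a few lines of plain Python. This is a minimal illustration under simplifying assumptions, not a production implementation: it uses the token vectors directly as queries, keys, and values (real models first apply learned projection matrices), it covers a single attention head, and the function names are chosen for this example.

```python
import math


def softmax(row):
    """Numerically stable softmax over a list of floats."""
    peak = max(row)
    exps = [math.exp(x - peak) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(queries, keys, values, causal=False):
    """Scaled dot-product attention over lists of equal-length vectors.

    With causal=True, position i attends only to positions <= i,
    as in a decoder-only model.
    """
    d_k = len(keys[0])
    outputs = []
    for i, q in enumerate(queries):
        scores = []
        for j, k in enumerate(keys):
            if causal and j > i:
                scores.append(float("-inf"))  # masked: no attention to later tokens
            else:
                scores.append(sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k))
        weights = softmax(scores)
        # Each output is a weighted average of all value vectors.
        outputs.append(
            [sum(w * v[c] for w, v in zip(weights, values)) for c in range(len(values[0]))]
        )
    return outputs


# Three toy token vectors; in this sketch they serve as Q, K, and V at once.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
attended = self_attention(tokens, tokens, tokens, causal=True)
print(attended[0])  # the first token can only attend to itself
```

Because every output mixes information from every visible position, a dependency between distant tokens costs no more to represent than one between neighbors.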

Supervised Fine-tuning

Supervised fine-tuning (SFT) is a traditional fine-tuning method that continues training a pre-trained large model on labeled data, adjusting the model’s parameters to improve its performance on specific tasks. The SFT phase typically relies on high-quality datasets. For example, to improve a model’s performance in legal consulting, a dataset of legal questions paired with responses from professional lawyers can be used for SFT. During SFT, the model is trained to minimize the difference between its predicted outputs and the true labels, as measured by a loss function such as cross-entropy. The method is straightforward and adapts quickly to new tasks, but it depends on high-quality labeled data and may overfit the training data.
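
The cross-entropy objective mentioned above can be written out directly. The sketch below assumes the model produces one list of raw scores (logits) per response token and that each label is the index of the correct token; the function names are illustrative, and a real training loop would compute this with a deep learning framework and backpropagate through it.

```python
import math


def token_cross_entropy(logits, target_index):
    """Cross-entropy for one prediction: -log softmax(logits)[target_index]."""
    peak = max(logits)
    log_sum_exp = peak + math.log(sum(math.exp(x - peak) for x in logits))
    return log_sum_exp - logits[target_index]


def sequence_loss(all_logits, target_indices):
    """Mean cross-entropy over the labeled response tokens."""
    losses = [token_cross_entropy(l, t) for l, t in zip(all_logits, target_indices)]
    return sum(losses) / len(losses)


# A confident correct prediction is penalized far less than a confident wrong one.
confident_right = token_cross_entropy([4.0, 0.0, 0.0], target_index=0)
confident_wrong = token_cross_entropy([4.0, 0.0, 0.0], target_index=1)
print(confident_right < confident_wrong)  # True
```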

Reinforcement Learning from Human Feedback

Reinforcement learning from human feedback (RLHF) is a more complex training method that combines elements of supervised learning and reinforcement learning. First, the model is pre-trained on a large amount of unlabeled text, similar to the steps prior to SFT. Then, human evaluators interact with the model or assess its outputs, providing feedback on its performance, which is used to train a reward model capable of predicting scores that human evaluators might assign. Finally, the original model’s parameters are optimized using the reward model as a reward signal through reinforcement learning methods. In this process, the model attempts to maximize the expected rewards it receives. The advantage of RLHF lies in its ability to help the model learn more complex behaviors, especially when tasks are difficult to define through simple correct or incorrect labels. Additionally, RLHF can help the model better align with human preferences and values.
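
One common way to train the reward model is from pairs of responses in which a human evaluator preferred one over the other. The sketch below shows such a pairwise loss. It is an assumption about the setup rather than something the description above prescribes: the reward scores are taken as given numbers, and the function name is hypothetical.

```python
import math


def preference_loss(reward_chosen, reward_rejected):
    """Pairwise loss -log(sigmoid(r_chosen - r_rejected)), computed stably.

    The loss is small when the reward model scores the human-preferred
    response higher than the rejected one, and large when it does not.
    """
    margin = reward_chosen - reward_rejected
    # -log(sigmoid(m)) equals log(1 + exp(-m)); branch to avoid overflow.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


agrees_with_human = preference_loss(reward_chosen=2.0, reward_rejected=0.0)
disagrees_with_human = preference_loss(reward_chosen=0.0, reward_rejected=2.0)
print(agrees_with_human < disagrees_with_human)  # True
```

Once trained, the reward model's score stands in for the human evaluator during the reinforcement learning stage, where the language model is updated to produce responses that score higher.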

Applications of Large Models

Large models, with their vast parameter counts, deep network structures, and extensive pre-training capabilities, can capture complex data patterns and exhibit exceptional performance across multiple domains. They not only understand and generate natural language but also process complex visual and multi-modal information, adapting to various dynamic application scenarios.

Applications in NLP

Large models are applied especially widely in NLP. OpenAI’s GPT series, for example, can generate coherent, natural text and is used in chatbots, automated writing, and language translation, with ChatGPT being the best-known product. In fintech, large models are often used for risk assessment, trading algorithms, and credit scoring: they analyze vast amounts of financial data, predict market trends, and help financial institutions make better investment decisions. In legal and compliance work, they can be used for document review, contract analysis, and case studies, understanding and analyzing legal documents to make legal professionals more efficient. Recommendation systems are another application area. Once user behavior data is serialized into text, a large model can predict user interests and recommend relevant products, movies, music, and more. In gaming, large models can use their coding capabilities to generate complex game environments and drive non-player characters (NPCs) to produce different dialogue depending on player settings, providing a more realistic gaming experience.
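
For the recommendation case, "serializing user behavior data into text" can be as simple as turning interaction records into a prompt. The sketch below is a hypothetical illustration: the record fields (`action`, `title`, `category`), the prompt wording, and the sample data are all assumptions made for this example, and the resulting string would still need to be sent to a language model.

```python
def serialize_history(user_events, candidates):
    """Turn interaction records into a plain-text prompt for a language model."""
    lines = ["The user recently interacted with these items:"]
    for event in user_events:
        lines.append("- {action}: {title} ({category})".format(**event))
    lines.append("Candidate items:")
    for number, title in enumerate(candidates, start=1):
        lines.append(f"{number}. {title}")
    lines.append("Which candidate is the user most likely to choose next? Answer with its number.")
    return "\n".join(lines)


# Made-up interaction records, used only to show the output format.
events = [
    {"action": "watched", "title": "Space Documentary", "category": "film"},
    {"action": "rated 5 stars", "title": "Robot Stories", "category": "book"},
]
prompt = serialize_history(events, ["Astronomy Lecture Series", "Cooking Basics"])
print(prompt)
```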

Applications in Image Understanding and Generation

Current large models possess not only text understanding but also multi-modal understanding, which lays the foundation for applications in the image domain such as automatic painting and video generation. These models can mimic an artist’s style to create new artworks, assisting human creativity. For instance, OpenAI’s Sora, announced in February 2024, can generate video that follows a user’s text description, offering a more convenient tool for film production. In image processing, vision models such as SegGPT are used for tasks like image segmentation and recognition. By learning from large collections of images and image-text pairs, large models can identify objects, faces, and scenes in images and contribute to medical image analysis, autonomous vehicles, and video surveillance. In medicine and biology, multi-modal large models can be used for disease diagnosis, drug discovery, and gene editing, extracting useful information from complex biomedical data to help doctors make more accurate diagnoses and help researchers design new drugs.

Applications in Speech Recognition

Large models also play a vital role in the field of speech recognition. Through deep learning technologies, models can convert speech into text, supporting applications such as voice assistants, real-time speech transcription, and automatic subtitle generation, with mobile voice assistants being a typical example. These models learn from a vast number of speech samples, enabling them to handle different accents, intonations, and noise interference.

Moreover, large models can be applied across various industries, including education, healthcare, agriculture, and finance. For instance, in education, large models can be used for personalized learning, automated grading, and intelligent tutoring, providing customized teaching content based on students’ learning situations, thus helping them learn more efficiently. In summary, large models demonstrate immense potential across various fields through their powerful data processing and learning capabilities. With continuous technological advancements, it is foreseeable that large models will play an increasingly important role in future developments.

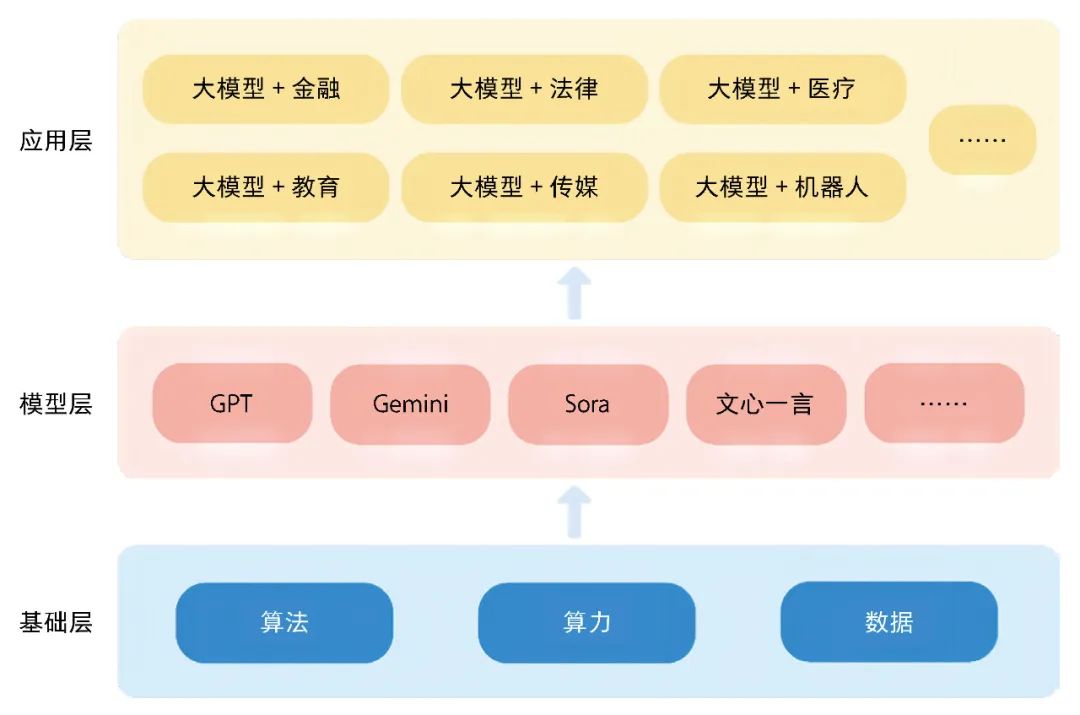

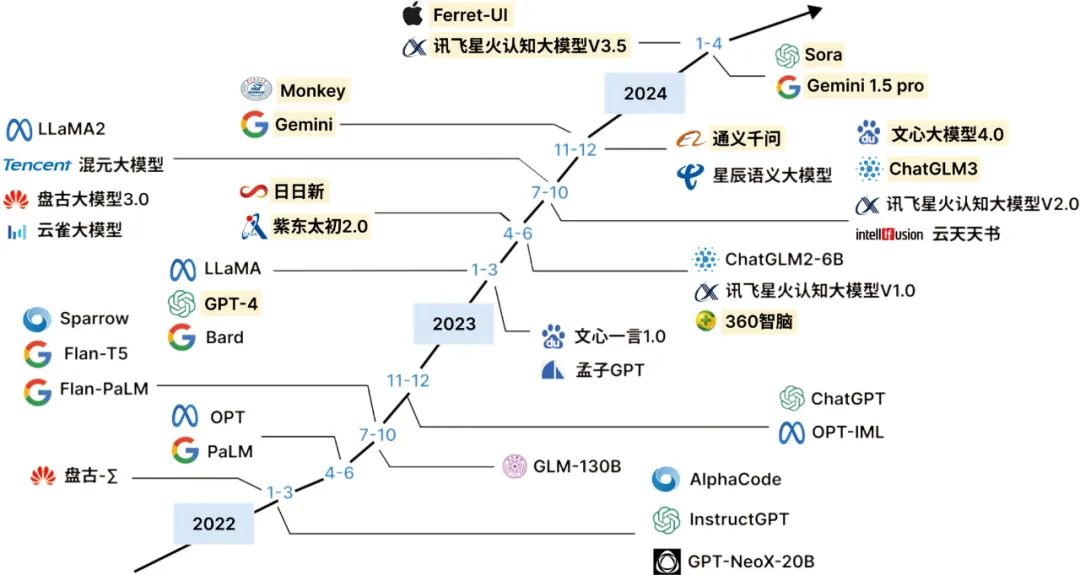

Development of Large Models

In the current AI landscape, large models have become an undeniable trend. With the continuous advancement of deep learning technologies, particularly in NLP and CV fields, large models are driving breakthroughs in cutting-edge technologies with their powerful data processing and pattern recognition capabilities.

The development of large models at the technical level benefits from several key factors. First is algorithmic innovation, especially following the introduction of the Transformer architecture, which has rapidly propelled the development of subsequent models, including BERT, the GPT series, and T5. These models achieve leading performance on various NLP tasks through pre-training and fine-tuning strategies. Second is the enhancement of computational power, particularly advancements in graphics processing units (GPUs) and tensor processing units (TPUs), making it possible to train models with tens of billions or even hundreds of billions of parameters. Additionally, the rise of cloud computing platforms provides the necessary computational resources for training large models. Meanwhile, large-scale datasets offer ample “nutrition” for model training, often containing rich language expressions, scene information, and user interactions, enabling models to capture complex data distributions and linguistic patterns.

The development of large models at the application level has two main directions: large language models and multi-modal large models. In the case of large language models, GPT-3 serves as a milestone, with a parameter count reaching 175 billion, showcasing astonishing language understanding and generation capabilities. Following closely, Meta AI’s LLaMA series models have become favorites in academic research and industry due to their excellent performance and relatively smaller model sizes. These models not only excel in standard NLP tasks but also exhibit tremendous potential in few-shot learning and transfer learning.

Multi-modal large models build on this foundation and can process and understand several types of input, such as text, images, and audio. OpenAI’s DALL-E and CLIP are representative works in this direction: DALL-E generates images from textual descriptions, while CLIP learns to match images with the text that describes them. Google’s SimCLR was a significant exploration in the CV field, extracting image features effectively through contrastive learning. Subsequently, Google’s Gemini made important strides as a natively multi-modal model, pre-trained across different modalities and able to handle more complex inputs and outputs, such as images and audio. OpenAI’s Sora further broadens the application scope of large models: it generates video content from input text, simulating interactions between characters and environments in both the physical and digital worlds.

The development history of large models is summarized, with highlighted entries representing multi-modal models.

Chinese tech companies are also actively exploring large models. Models such as Baidu’s “Wenxin Yiyan,” Alibaba’s “Tongyi Qianwen,” Huawei’s “Pangu,” and iFlytek’s “iFlytek Spark” have emerged, performing well not only in general language understanding and generation but also in specialized applications in fields such as healthcare, law, and tourism. For example, Ctrip’s “Ctrip Wenda” focuses on Q&A in the tourism sector, NetEase Youdao’s “Ziyue” is applied in education, and JD Health’s “Jingyi Qianxun” aims to provide medical consultation services.

Challenges of Large Models

In the field of AI, large models are becoming a hot topic in both academic research and industry due to their powerful processing capabilities and broad application prospects. However, as these models continue to expand, the challenges faced at the research frontier are becoming increasingly complex.

Model Size

The trade-off between model size and data scale has become a significant challenge. While model performance often improves with an increase in parameter count, this growth in scale brings substantial computational costs and high demands for data quality. Researchers are seeking optimal balances between model size and data scale under limited computational resources, while also exploring techniques such as data augmentation, transfer learning, and model compression to reduce model size without sacrificing performance, striving to minimize the operational costs of large models.

Network Architecture

Innovation in network architecture is also crucial. Most existing large models are based on the Transformer. Although the Transformer handles sequential data very well, the cost of self-attention grows quadratically with sequence length, and its parameters are not always used efficiently, which can waste computational resources. These limitations have prompted researchers to design new architectures that improve efficiency and generalization through enhanced attention mechanisms, sparsity, and adaptive computation. For instance, Mamba, a state-space model proposed in December 2023, introduces a selection mechanism and scales linearly with sequence length, substantially easing the efficiency problems of existing Transformer architectures. It is seen as a candidate foundational architecture for the next generation of large models.
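
The efficiency concern can be made concrete by counting work as the input grows. The sketch below is a simplified cost model, not a benchmark: it assumes that one full self-attention head scores every pair of tokens, while a recurrent or state-space scan performs one state update per token. Constant factors and hidden sizes are ignored, and the function names are chosen for this example.

```python
def attention_pairs(seq_len: int) -> int:
    """Token pairs scored by one full self-attention head (every token with every token)."""
    return seq_len * seq_len


def scan_steps(seq_len: int) -> int:
    """State updates made by a recurrent or state-space scan: one per token."""
    return seq_len


for n in (1_000, 4_000, 16_000):
    print(f"{n:>6} tokens: {attention_pairs(n):>12,} attention pairs, {scan_steps(n):>6,} scan steps")
```

Under this model, quadrupling the input length multiplies the attention work by sixteen but the scan work only by four, which is why long inputs are where alternative architectures are expected to help most.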

Prompt Engineering

Prompt learning offers a new paradigm for handling imbalanced datasets. By embedding specific prompts in the input data, it helps improve model performance on minority classes. However, designing effective prompts and evaluating their robustness (that is, whether they remain effective across different types of large models) has become a discipline in its own right: prompt engineering. Further research is needed on how to combine well-designed prompts with other large model technologies.
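
As a small illustration of embedding prompts in the input, the sketch below assembles a few-shot classification prompt. The template (`Input:` and `Label:` markers), the function name, and the sample reviews are assumptions for this example. For an imbalanced task, one option is to make sure the examples include the minority class, as done here with the single "defect report" example.

```python
def build_few_shot_prompt(instruction, examples, query):
    """Assemble an instruction, labeled examples, and a new input into one prompt."""
    parts = [instruction.strip(), ""]
    for text, label in examples:
        parts.append(f"Input: {text}")
        parts.append(f"Label: {label}")
        parts.append("")
    parts.append(f"Input: {query}")
    parts.append("Label:")  # left open for the model to complete
    return "\n".join(parts)


# Made-up examples; "defect report" plays the role of the minority class.
examples = [
    ("Arrived on time, works as described.", "ordinary review"),
    ("The handle snapped off on first use.", "defect report"),
]
prompt = build_few_shot_prompt(
    "Classify each customer message as 'ordinary review' or 'defect report'.",
    examples,
    "Screen flickers after ten minutes.",
)
print(prompt)
```

Testing robustness then amounts to sending the same prompt, or small variations of it, to different models and comparing the answers.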

Contextual Reasoning

As model sizes grow, emergent abilities such as contextual reasoning have surfaced, suggesting that large models may have internalized cognitive and learning mechanisms closer to human understanding. The nature of these emergent abilities, the conditions that trigger them, and their controllability are current research hotspots. Reasonable explanations will require further exploration from the perspectives of cognitive science and neuroscience, so that people can understand the principles behind these capabilities.

Knowledge Updating

The continuous updating of knowledge is another significant issue faced by large models. As knowledge progresses, the information within models may quickly become outdated. Researchers are exploring methods to enable models to continuously learn and integrate new knowledge while avoiding catastrophic forgetting to keep the model’s knowledge base up-to-date.

Explainability

Although large models excel at many NLP and machine learning tasks, their decision-making becomes harder to explain as parameter counts increase and network structures deepen. The black-box nature of large models makes it difficult for users to understand how they process input data and produce output. Users are left in a passive position: they see the model’s output but do not know why the model produced it.

Privacy and Security

The training data for large models may include personal identity information, sensitive data, or trade secrets. If this data is not adequately protected, the training process can create risks of privacy breaches or misuse. In addition, large models may themselves retain sensitive information, such as content memorized from sensitive training data, which introduces further privacy risks.

Data Bias and Misinformation

Large language models may output biased or misleading content, which can stem from various factors such as data collection methods, annotators’ subjective preferences, and socio-cultural influences. When models are trained on biased data, they may incorrectly learn or amplify these biases, leading to unfair or discriminatory outcomes in practical applications.

Addressing these issues is crucial for advancing large model technology and expanding its application range. Solving each challenge could facilitate more effective applications of AI in the real world, bringing profound impacts to human society.

Future of Large Models

As AI technology continues to evolve and the application scenarios for large model technology expand, future trends in large model technology are emerging with new characteristics and development directions.

Balancing Model Scale and Efficiency

Because large models often require substantial computational resources and storage, future development will focus on improving efficiency while maintaining model scale, in order to meet practical application needs. Sparse expert models are gaining attention as a new architectural approach. Compared with traditional dense models, sparse expert models reduce computation by activating only the parameters relevant to a given input, thereby improving efficiency. Google’s sparse expert model GLaM, introduced in December 2021, has roughly seven times as many parameters as GPT-3, yet it consumed less energy during training and requires less computation for inference, while outperforming traditional dense models on various NLP tasks.
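
The idea of activating only the relevant parameters can be sketched as top-k routing: a router scores every expert for the current input, and only the k best experts are run. The code below is a minimal illustration under stated assumptions. The experts are toy functions, the router scores are passed in directly instead of being produced by a learned router, k = 2 is chosen only for illustration, and the function names are hypothetical.

```python
import math


def top_k_gate(router_logits, k=2):
    """Pick the k highest-scoring experts and renormalize their weights."""
    ranked = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:k]
    peak = max(router_logits[i] for i in chosen)
    exps = {i: math.exp(router_logits[i] - peak) for i in chosen}
    total = sum(exps.values())
    return {i: exps[i] / total for i in chosen}


def sparse_layer(x, experts, router_logits, k=2):
    """Run only the selected experts and mix their outputs by gate weight."""
    gates = top_k_gate(router_logits, k)
    return sum(weight * experts[i](x) for i, weight in gates.items())


# Four toy "experts"; only two of them are evaluated for this input.
experts = [lambda x: x + 1, lambda x: 2 * x, lambda x: x * x, lambda x: -x]
router_logits = [0.1, 2.0, 2.0, -1.0]
gates = top_k_gate(router_logits)
output = sparse_layer(3.0, experts, router_logits)
print(sorted(gates), output)  # prints: [1, 2] 7.5
```

The layer can hold many experts, and therefore many parameters, while the cost per input depends only on k.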

Deep Integration of Knowledge

Knowledge integration aims to enrich a model’s representational and decision-making capabilities by consolidating information from different data sources and knowledge domains. Many large models are still trained on and applied to single-domain or single-modal data, such as BERT in NLP and ViT in CV. In the real world, however, text, images, audio, and other forms of information are often interrelated, and single-modal information struggles to meet the demands of complex scenarios. As CV, speech recognition, and related technologies continue to develop, future large models will therefore focus increasingly on multi-modal integration, processing data from different modalities so that the information can be fused and can interact. This ability allows large models to better understand and process complex information. Large model technology can also be combined with external knowledge bases to further enhance a model’s understanding and breadth of application. Models can then draw not only on their internal language patterns and statistical information but also on external structured knowledge for reasoning and decision-making, which helps them address complex real-world problems. External knowledge can also improve the generalization capabilities of large models.

Exploration of Embodied Intelligence

Embodied intelligence refers to an intelligent system that perceives and acts through a physical body. It acquires information, understands problems, makes decisions, and executes actions by interacting with its environment, and intelligent behavior emerges from that interaction. The spread of large models has significantly accelerated research on and implementation of embodied intelligence. Large language models are becoming key tools for helping robots understand and apply high-level semantic knowledge. By automatically analyzing tasks and breaking them down into specific actions, large model technology makes robots’ interactions with humans and with physical environments more natural, improving their intelligent performance. Different tasks can be handled by different large models: a large language model for learning dialogue, a visual model for map recognition, and a multi-modal model for carrying out physical actions. With this combination, robots can learn concepts and direct actions more efficiently, decompose instructions for execution, and achieve automated scheduling and collaboration. Using different models together in this way presents new opportunities and challenges for the development of intelligent robots.

Explainability and Trustworthiness

As model scales increase, their internal structures become increasingly complex, making the explainability and trustworthiness of models focal points of concern. First, to enhance model explainability, researchers will strive to develop new methods and technologies that enable large models to clearly explain their decision-making processes and the basis for generated results. This may involve introducing more transparent model structures, such as transparent neural networks or interpretable attention mechanisms, as well as developing explanatory algorithms and tools to help users understand model outputs.

Second, to enhance model trustworthiness, a series of measures will be taken to reduce the likelihood of models generating errors or misleading information. One important direction is to introduce external information sources and equip models with the ability to access and reference these sources. This way, models will be able to access the most accurate and up-to-date information, thereby improving the accuracy and credibility of their output results.
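
Equipping a model with access to external sources can be sketched as two steps: retrieve relevant passages, then place them in the prompt with numbers so the answer can refer back to them. The code below is a toy illustration, not the method of any particular system. Retrieval here is plain keyword overlap, where real systems typically use learned retrievers or search engines, and the document fields, file names, and function names are hypothetical.

```python
def retrieve(query, documents, top_n=2):
    """Rank documents by how many query words they share (toy keyword overlap)."""
    query_words = set(query.lower().split())
    scored = []
    for doc in documents:
        overlap = len(query_words & set(doc["text"].lower().split()))
        if overlap:
            scored.append((overlap, doc))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_n]]


def build_cited_prompt(query, documents):
    """Number the retrieved passages so the answer can point to them as [1], [2], ..."""
    passages = retrieve(query, documents)
    lines = ["Answer using only the sources below and refer to them by number.", ""]
    for number, doc in enumerate(passages, start=1):
        lines.append(f"[{number}] ({doc['source']}) {doc['text']}")
    lines.extend(["", f"Question: {query}"])
    return "\n".join(lines)


# Hypothetical source files; the facts themselves come from this article.
documents = [
    {"source": "notes/mamba.txt", "text": "Mamba was proposed in December 2023"},
    {"source": "notes/gpt3.txt", "text": "GPT-3 has 175 billion parameters"},
]
prompt = build_cited_prompt("when was mamba proposed", documents)
print(prompt)
```

Because each passage carries its source, a reader can check the model's answer against the material it was given.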

Simultaneously, to increase transparency and trust, models will also provide citations related to external information sources, allowing users to audit these sources and determine the reliability of the information. Notably, while some large models with external information access and citation capabilities have emerged, such as Google’s REALM and Facebook’s RAG, this is merely the beginning of development in this field. More innovations and advancements are expected in the future. For example, new models like OpenAI’s WebGPT and DeepMind’s Sparrow will further propel development in this area, laying a more solid foundation for the future applications of large model technology. The future development of large model technology will place greater emphasis on explainability and trustworthiness, which is not only an inevitable trend in technological development but also a reasonable requirement from society for technological applications. Only by continuously enhancing the explainability and trustworthiness of models can large model technology be better applied across various fields, bringing greater momentum for the development of human society.
